How to analyze business performance in real-time - Automated Financial Modeling

“If you can’t measure it, you can’t manage it”

We often see that quote attributed to Peter Drucker, as a justification for measuring things. He not only never actually said that, but even spoke about the perils of measuring social aspects of the work.

But when speaking about hard numbers, such as company finances, I would say that the quote is a valid one. I would take it even further, and say that “what does not get measured automatically, does not get managed often enough.” As with any system, getting early warnings and understanding how the organization is performing, works much better if we do it in real-time.

In most companies, understanding the performance of the whole operation from a financial perspective is something that is done, in the best cases, periodically. A financial analyst will “crunch” the quarterly numbers, build the model in a spreadsheet, and put together a powerpoint for management to review.

There are several problems with this approach:

- Latency: a quarterly analysis means that with luck, we can catch today, a negative trend that could have started up to four months ago. Even worse, feedback on any corrective action will take another 3-month cycle to close the loop. We could measure a few metrics more often, but systems interdependence means we’ll probably break aspect B after trying to fix aspect A. We need to monitor the whole thing.

- Repeatability: spreadsheets are an excellent tool for sketching mathematical models, but businesses should not rely on the idiosyncrasies of individuals to build a financial tool that is almost impossible to verify, test and maintain. Spreadsheet risk in financial modeling is even a thing.

- Centralization: a cryptic spreadsheet plus an executive summary powerpoint means only a fraction of the organization will understand or even have access to the model. That is often by design, but a more distributed understanding of the business model means more people can make informed decisions or understand management directives.

To address those shortcomings, we wanted to design a real-time, easy-to-understand, but formal model of the whole organization. And we wanted it to be based on quality custom software fed with real-time information.

A significant advantage of having such a model is that evaluating different scenarios, policy changes, and decisions is a breeze: we simply plug-in the numbers and see the results.

Design approach

We wanted to:

- Have a visualization, in a single chart, that showed the performance of the whole organization, with as much detail as possible, yet remained clear enough to understand if we saw a positive or negative scenario and what were the causes of it.

- A system that pulled real-time information automatically from our other systems in place, so that minimal manual upkeeping was needed.

- A fail-safe mechanism that reduced the possibilities of flying blind due to errors in the model.

Whole system visualization

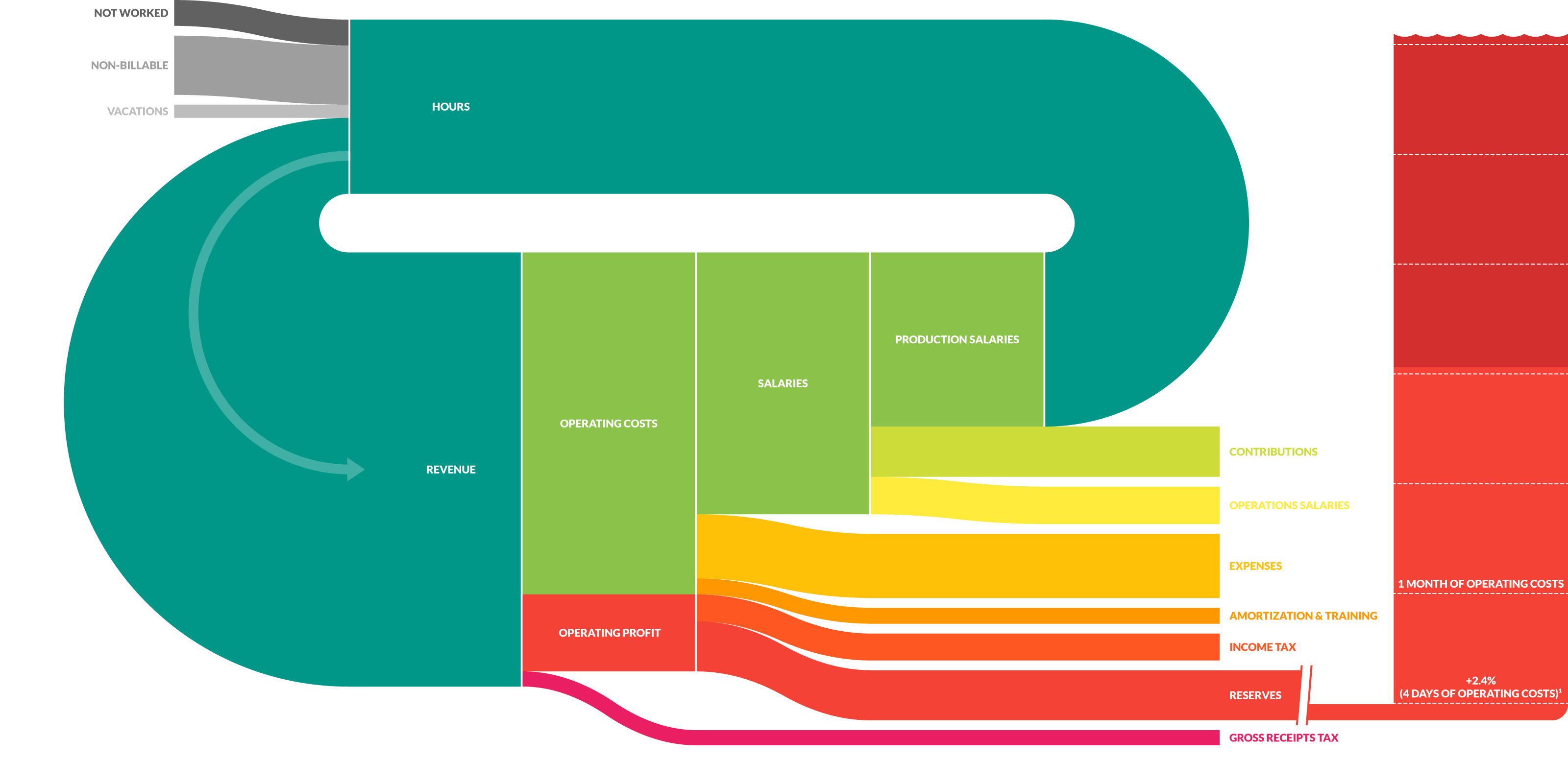

Flow of Income and Expenses

To depict the closed-loop nature of a business, we settled on a Sankey diagram. Sankeys are a great way of visualizing flows. As in many service organizations, our primary source of income is the time from our team that we bill against projects. The width of the “hours” ribbon in the chart depicts a month worth of available team hours. Some of those are not-worked (days-off of different kinds, less than 40hs/week reported, etc.), others are taken off as vacations, some are non-billable (internal projects, business development, training, team-building activities). The rest are billable hours, which are the primary source of income. In their transformation path to money, they get multiplied by a weighted-average fee. That ribbon then becomes our total monthly revenue, which then has a sequence of outgoing flows:

- Gross receipts tax: calculated as a percentage of the monthly revenue.

- Operating Cost: total monthly costs averaged over the last 365 days.

- Operating profit: income minus costs.

- Income tax: the tax on operating profit.

- Reserves: the net profit after taxes feeds into our reserves, plotted as a water tank. There’s a change of scale there to keep things balanced, and subdivisions in the reserves tank with the size of our total monthly operating cost. That way, we can quickly see how many months in reserves we have.

- Salaries: the most significant portion of our costs is salaries, which we then split into Operations Salaries and Production Salaries. The later are then the source of our billable hours, closing the loop.

Data sources

As mentioned, one of the goals was to have things running with as little manual input as possible. With that in mind, the model is pulling information in real-time from many of our other systems:

- Time utilization: this comes out of Brium, a product we developed for consulting agencies to track their time. We, of course, love it and use it internally. It tracks billable and non-billable hours, as well as time off.

- Income: using billable hours, and knowing which projects are assigned to it, the system pulls the current fee for each role and project and calculates the value of those hours. For currency exchange rates, we use a 3rd party service.

- Expenses: a monthly average over the last 365 days is calculated, pulling information from our accounting system (we use Quickbooks Online).

- Salaries: our salaries are internally shared, and this system pulls the current salaries from that same data source.

- Reserves: both cash and accounts receivable are obtained from our accounting system.

Dual redundancy as a fail-safe mechanism

To reduce the chance of costly mistaken decisions based on a faulty model, we created two parallel data pathways that check on each-other.

The first one is a forecasting model (more on that later) that runs a projection of what are feasible scenarios for the next cycle, based on estimated project throughput, revenue, budgeted costs, and a few macroeconomic variables. We use this to track how we are achieving our financial goals for the ongoing cycle.

The second one performs a reality-check on the first one, by measuring actuals using past-performance. Its output is a prototypical average-month that is represented as the Sankey mentioned before. This system is a monitor of real performance. Any expense that was not considered in the forecast will end up captured here; any income that was optimistically modeled and did not happen, will not be included.

Running the model

The model is continuously run, so every time anybody in the organization opens the internal page that displays the results, they can see up to date information.

The code that powers the model (proudly built on Crystal) works as a fantastic documentation of how we think of our financials and is transparently available for anybody to take a look. Any needed change can follow careful code-reviews, quality assurance, and version tracking.

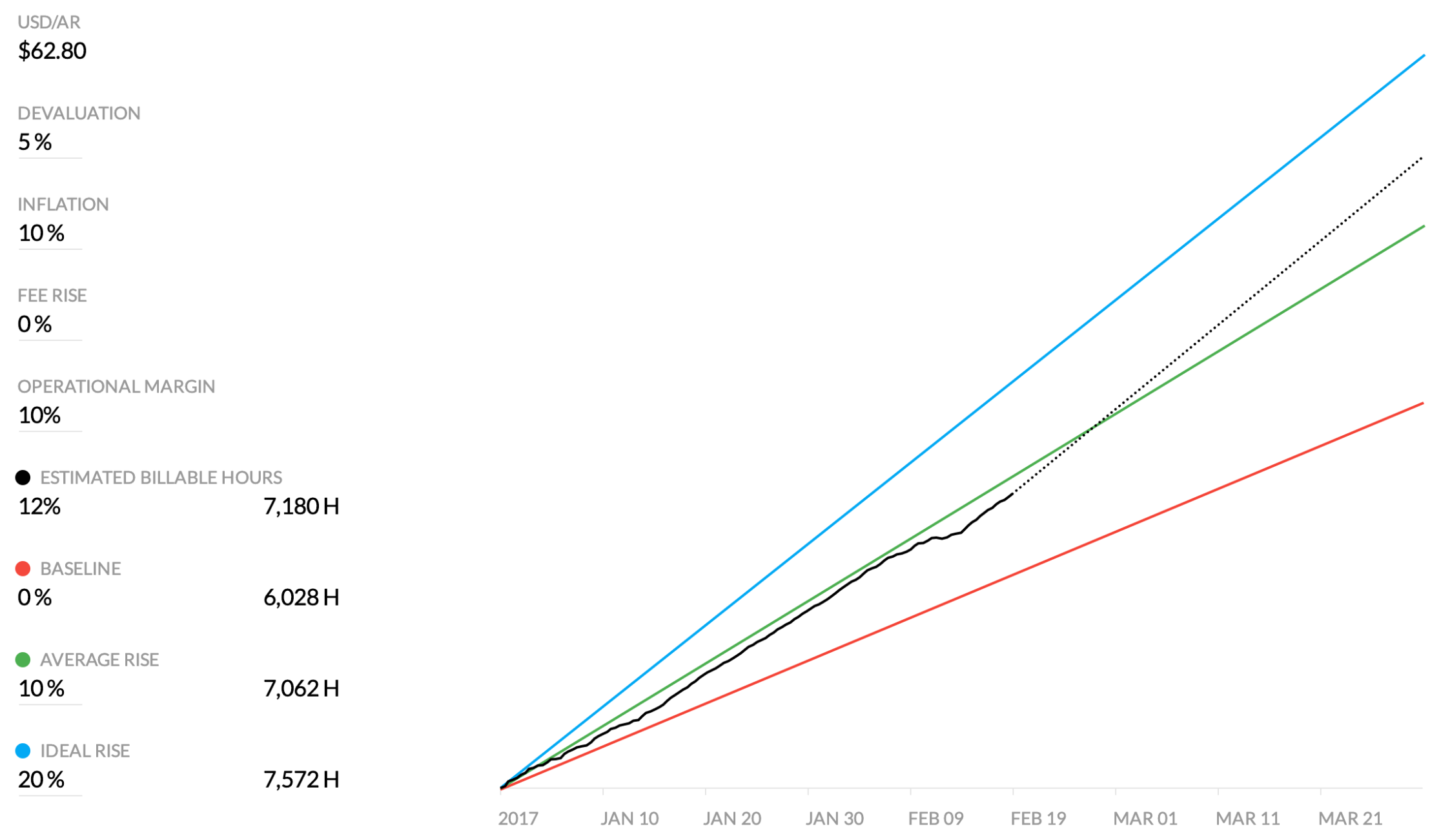

Scenario Forecasting

At the start of each period, we model a few different scenarios, such as a baseline one to maintain the status quo, a more favorable situation where our margins are higher, and an ideal scenario with a stretch goal.

Scenario forecasting

The model forecasts in real-time –as time passes and more data is available– which of the scenarios we are likely to hit. We also use this forecasting to analyze budget scenarios for our decentralized budgeting & expensing system.

Summary

We started working on this around 2014. The total effort was below 1600 hours, which we think is insignificant compared to the benefits we got out of it.

We have relied on these systems heavily to monitor the business performance both during good times and bad times. We have been able to maneuver and make informed decisions, knowing that we had a robust pulse measuring how all the key-variables were performing. On one occasion, the dual redundancy system helped flag the issue that we had failed to update a considerable expense (office rental), which resulted in the monitoring system always reporting lower performance than the forecasting system.

We would love to encourage other organizations to build their custom financial models using similar practices. Feel free to reach out; we’d like to help in any way we can.